The trusted software delivery solution for the agentic era

CloudBees Unify is the enterprise control and context plane for your entire AI-powered software delivery lifecycle: open, multi-agentic, and continuously secure.

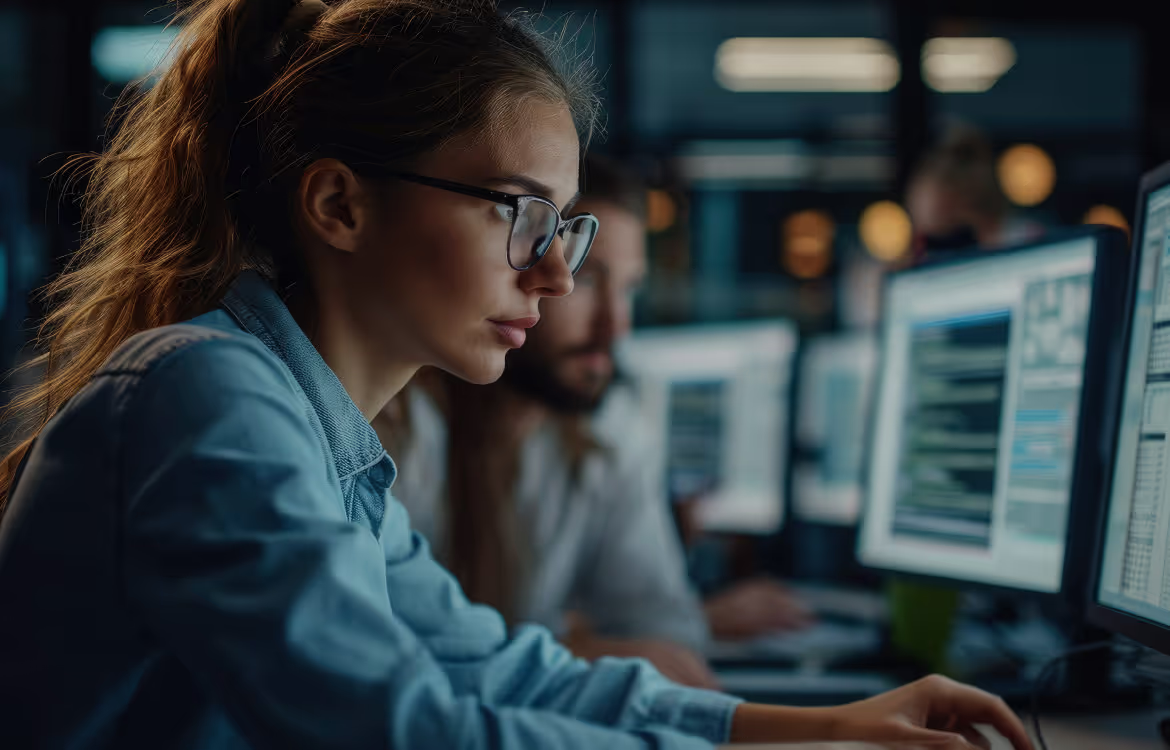

Trusted by teams delivering software at global scale

Fragmentation slows delivery, increases risk, and limits scale

Wasted engineering time

Developers spend more time managing tools, pipelines, and handoffs than building value, slowing innovation and increasing burnout.

Slower time to market

Disconnected workflows and manual release coordination slow delivery and make it harder to ship with confidence.

Increased security risk

Fragmentation creates blind spots across the SDLC, making audits difficult, governance inconsistent, and risk harder to detect and control.

“CloudBees Unify understands what many platforms miss—ripping and replacing simply doesn’t work at the enterprise level. We need solutions that complement our existing systems, not conflict with them. That’s exactly why CloudBees Unify is so compelling to us.”

CloudBees Unify is the software delivery solution for the agentic enterprise

CloudBees Unify offers trusted AI-powered capabilities to support critical delivery workflows across modernization, automation, governance, and security.

Proven and trusted results for enterprises

CloudBees Unify's agentic software delivery solution empowers enterprises by eliminating toil, accelerating delivery, and embedding continuous security, while giving their developers intelligent tools to innovate faster and with confidence.

Up to 70% less build and development time

Up to 10x faster to deploy and release software

Up to 21k engineering hours saved annually

Trusted by enterprises, loved by developers
